By Sarah Carver, head of digital services at Delta Capita.

Data science and analytics are key to growth for any financial services organisation, and over the past decade there has been growing recognition of the potential value of data. However, despite the increasing importance of data science, many institutions struggle with its complexity.

This complexity is exacerbated by the use of jargon incomprehensible to those unfamiliar with the technology’s intricacies. It can be challenging to bridge this gap and communicate effectively between data teams, business functions, and governing boards to ensure the best possible outcomes. While unsupervised data analysis has its uses, targeting solutions based on business requirements is far more effective.

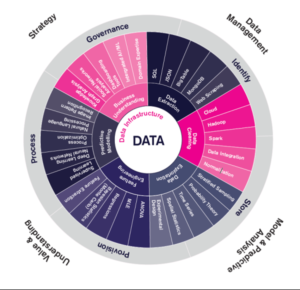

In this article, we take a top-down look at the data landscape. We examine the technology, processes and key challenges involved, and how these interact with strategy, data management, and analysis to build value and understanding for your organisation.

Data Management

Data management is about controlling how, when and where your data is stored. This used to entail large column architecture databases, operated by internal IT teams at great expense. Data is now more widely available than ever before thanks to the decentralisation made possible by modern database technologies, which allow near-instant access to relevant data through secure connection channels. Professional services provide extensive APIs for you to subscribe to data feeds and manage data dependencies.

Another key aspect of data management is security and access control. A lack of security measures has led to significant customer data breaches via hacking and phishing, and large fines have been levied against firms by regulators across the globe.

By using modern data infrastructure, including professional cloud service providers (AWS, Azure, Google), data lakes and secure databases with role-based access controls, organisations can ensure that their own private data assets and their customers’ data are secure from nefarious actors.
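To make role-based access control a little more concrete, here is a minimal sketch in Python. The role names, permission strings and the `is_allowed` helper are all hypothetical and chosen purely for illustration; in practice these rules would normally be configured in the database or cloud provider rather than hand-written.

```python
# Hypothetical roles and permissions, for illustration only.
ROLE_PERMISSIONS = {
    "analyst": {"read:trades"},
    "data_engineer": {"read:trades", "write:trades"},
    "compliance": {"read:trades", "read:customer_pii"},
}


def is_allowed(role: str, permission: str) -> bool:
    """Return True only if the role has been explicitly granted the permission."""
    # Unknown roles get an empty permission set, so access is denied by default.
    return permission in ROLE_PERMISSIONS.get(role, set())


if __name__ == "__main__":
    print(is_allowed("analyst", "read:trades"))
    print(is_allowed("analyst", "read:customer_pii"))
```

The point of the sketch is the deny-by-default design: access is granted only where a role has been explicitly given a permission, and anything not listed is refused.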

Model and Predictive Analysis

Modelling and predictive analysis are used by all types of organisations to manage supply chains, monitor risk, and provide support to customers. They are an essential part of everyday business, and most people have a good understanding of these approaches. Technology is now leading the way with big data. Having discussed some of the challenges in storage and access above, we now look at the data itself.

Data is messy! There, I said it. Never have we come across a data set that did not need cleaning in some way. Cleaning data encompasses the methods and actions taken to modify a data set so that it is uniform. Dates and timestamps are the canonical example. Dates are formatted in various ways, such as American mm/dd/yyyy and European dd/mm/yyyy. These must be converted to a single format, otherwise errors will occur in downstream processing. The issue becomes more challenging with timestamps recorded in different time zones across global institutions, which must be aligned (for example, to UTC) before applying time series analysis.
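The date problem described above can be sketched in a few lines of Python using only the standard library. This is an illustration under stated assumptions, not a production cleaning pipeline: it assumes each record's source format is already known (the `"us"` and `"eu"` labels are hypothetical), and that timestamps arrive as ISO 8601 strings carrying an explicit UTC offset.

```python
from datetime import datetime, timezone

# Hypothetical mapping from a source-system label to the date format it uses.
DATE_FORMATS = {
    "us": "%m/%d/%Y",  # American mm/dd/yyyy
    "eu": "%d/%m/%Y",  # European dd/mm/yyyy
}


def normalise_date(raw: str, source: str) -> str:
    """Parse a date in the source's known format and return ISO 8601 (yyyy-mm-dd)."""
    parsed = datetime.strptime(raw.strip(), DATE_FORMATS[source])
    return parsed.date().isoformat()


def to_utc(raw_timestamp: str) -> datetime:
    """Convert an ISO 8601 timestamp that carries a UTC offset into UTC."""
    parsed = datetime.fromisoformat(raw_timestamp)
    if parsed.tzinfo is None:
        # Without an offset we cannot know which zone was meant, so refuse to guess.
        raise ValueError("timestamp has no UTC offset; cannot convert safely")
    return parsed.astimezone(timezone.utc)


if __name__ == "__main__":
    print(normalise_date("03/04/2023", "us"))  # 2023-03-04
    print(normalise_date("03/04/2023", "eu"))  # 2023-04-03
    print(to_utc("2023-03-04T09:30:00-05:00").isoformat())  # 2023-03-04T14:30:00+00:00
```

Note that the same string, 03/04/2023, yields two different dates depending on its origin. This is why the format has to be known from the source system rather than guessed from the value itself.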

There are many old and new technologies available for modelling and analysing your data. Tools such as Excel, Power BI and Tableau provide platforms to explore and analyse your data using tables and visuals. More recently, artificial intelligence (AI) has been used to establish previously unknown relationships in data, leading to new revenue streams.

Value and Understanding

Building value and understanding from your predictive models and analysis is key to developing actionable insights.

Historically, interpreting and understanding data to derive key insights was in the hands of business analysts and subject matter experts. Today, there is much more focus on self-service analytics, where the raw data and relevant analytical tools are delivered directly to decision makers and managers. They can still see the top-line figures as before, but can also dive deep into the data to find the specific reasons behind those figures, all at the click of a few buttons.

AI, in the form of machine learning and natural language processing, is a recent development that mines huge data stores to find previously unknown patterns and relationships that can guide further analytical work to drive new products and services. Users have also seen significant improvements in terms of customer service due to smart bots that are able to understand and respond to customer queries.

Strategy

Data has become the determining factor in driving innovation and revenue. The firms that can collect, manage, analyse, and understand their data assets will lead their respective sectors – examples are Google, Facebook, and Amazon. These firms have been able to harness their data to build and deliver revolutionary services that have changed the way we live.

However, the changing use cases and technology required can lead to issues if not properly managed. Data governance provides the necessary rigour over the data content as changes occur to the technology, processing and methodology associated with the data strategy effort. Good data governance is therefore a necessity in operating a modern, data-driven organisation, and financial services firms are no exception.

Using professional services, such as cloud providers, governance teams can ensure that their policies, rules, and methods are established and adhered to across the organisation. These policies set uniform requirements for data usage, access, manipulation, and management.

In Conclusion

In this article, we have discussed the data science and analytics process and developed an understanding of the concepts, technologies, and impacts that this has on institutions’ governance and operations at each stage.

We have shown that challenges exist in dealing with large and ever-growing volumes of data. If these challenges are overcome by applying modern technologies and data-driven decision-making, institutions can develop new business revenue, personalise their customer offerings and operate more efficiently.

This article is based on Delta Capita’s Data Wheel.
