By Tony Seale, Knowledge Graph Engineer at Tier 1 Bank.

Large Language Models (LLMs) like ChatGPT possess enormous power, stemming from their capability to ingest and compress vast amounts of general information gathered from the web. However, this capability is general rather than tailored to your specific business needs. To effectively utilise these models in a context relevant to your business, it’s essential to provide them with specific information and data related to your sector and niche. After all, if the general LLM knows everything your business knows – what’s the point of your business? But here’s the kicker: if you put garbage in, you get garbage out. Disorganised data will result in vague or even inaccurate answers.
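As a minimal sketch of what "providing specific information" can look like in practice, the snippet below places a few well-organised business records alongside a question in a single prompt. The product records, field names, and the `build_prompt` helper are all hypothetical and purely illustrative; no particular LLM API is assumed, and the resulting string would be passed to whichever model you use.

```python
import json

# Hypothetical, hand-written records standing in for well-organised business data.
PRODUCTS = [
    {"@type": "Product", "name": "Fixed Rate Bond", "termMonths": 12, "ratePercent": 4.1},
    {"@type": "Product", "name": "Easy Access Saver", "termMonths": 0, "ratePercent": 2.3},
]


def build_prompt(question: str, records: list[dict]) -> str:
    """Combine a user question with structured business records in one prompt string."""
    # One JSON object per line keeps each record explicit and unambiguous.
    context = "\n".join(json.dumps(record, sort_keys=True) for record in records)
    return (
        "Answer using only the business data below.\n"
        f"Business data:\n{context}\n"
        f"Question: {question}"
    )


prompt = build_prompt("Which product has a 12 month term?", PRODUCTS)
print(prompt)
```

The cleaner and more consistent the records, the less the model has to guess; the same helper fed with inconsistent or incomplete records would simply pass that disorder on to the model.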

The quality of your AI offering depends directly on the quality of the data you input into the LLM. In other words, the quality, connectivity, organisation, and availability of information within your organisation are key factors in determining the success of your main generative AI use cases. However, there is a harsh truth to acknowledge: the data estates of most large organisations are currently very disorganised.

Given that the organisation of our data is directly related to the quality of our LLM’s responses, perhaps our primary AI strategy should actually be to double down on our data strategy!

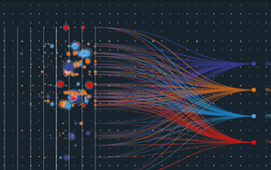

Organising your total data estate is no trivial task, but I believe the great AI acceleration will soon make it necessary. While there are no simple answers, here are some links offering insights into building a semantic data mesh, an architectural blueprint that could help you navigate this complex journey:

- Building Your Own Schema.org: https://lnkd.in/eumPB3Hj

- Building Your Connected Data Catalog: https://lnkd.in/eScj_nfg

- Building Your Data Network: https://lnkd.in/evUNGpwz
